Creative AI Use Cases - Apr 16, 2026

How to organize your second brain, custom datasets, clinical trial predictions and two new benchmarks

Flat Circle tracks creative uses of LLMs in hedge funds. Join hundreds of PMs, analysts and engineers reading each week:

Custom datasets you can build with Claude Cowork

Flag discrepancies between earnings press releases and transcripts (InfoArb)

Yale finance professor creates a custom dataset of tariff exposure (Paul Goldsmith-Pinkham)

Extract supplier geographies from a 10-K filing, determine transit corridors, and query AIS vessel-tracking databases and ICEGATE customs registries to audit and monitor supply chains (Alan Shore)

Analyze webcam footage of a fab construction site, counting building levels based on crane heights, to test consensus for WFE spending (@lfg_cap)

Official Claude for Financial Services plugin, including skills like catalyst-calendar, model-update, morning-note and idea-generation (GitHub). Related, a trader published skills around market analysis, technical charting, economic calendars and screeners (GitHub)

How to organize your second brain

Lots of discussion this week re: building your own personal knowledge base - a system of markdown files containing your investment philosophy, research, patterns, skills, notes, etc. - so that your Claude Cowork or OpenClaw can deliver exactly what you need.

It’s all about how you organize it. Models perform worse if you feed them too much information, so you want to arrange your knowledge base so that your system pulls in exactly the context it needs to make a given decision, without missing anything important or diluting the context with unnecessary info.

A few approaches:

OpenAI cofounder Andrej Karpathy published LLM Wiki, which organizes your knowledge into a personal Wikipedia, with agents that maintain and crosslink concept pages. The focus is getting your system to reflect your views on a given company, sector or theme (@karpathy, GitHub).

The CEO of Y Combinator published GBrain, a framework optimized for systems like OpenClaw, where the focus is performing as many different actions as possible (GitHub).

The Fifth Element star Milla Jovovich published MemPalace, a framework optimized for retrieval of verbatim documents (GitHub).

A former sellsider recommends pulling in four kinds of context for each decision: (i) the most important events, (ii) the most recent events, (iii) retrieved related events, (iv) curated long-term memories. This attempts to model how the human brain compiles knowledge to make a decision (@HenryChien4).

My take: the right organizational scheme depends on what you’re optimizing for and your ability to catch mistakes. GBrain is great for personal AI assistants. LLM Wiki best reflects your views and experience. MemPalace optimizes for accurately returning the right source documents. The more gold standard examples you can test against, the easier it is to experiment with organizational schemes.
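To make the former sellsider's four-part recipe concrete, here is a minimal sketch of how a system might assemble context for one decision. Everything in it is an assumption for illustration: the `Note` fields, the 1 to 5 importance score, tag overlap as a stand-in for retrieval, and the `build_context` name are hypothetical and not taken from any of the frameworks above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Note:
    title: str
    written: date
    importance: int            # hypothetical 1 (minor) to 5 (thesis-changing) score
    tags: frozenset
    curated: bool = False      # True for hand-picked long-term memories


def build_context(notes, query_tags, per_bucket=2):
    """Take up to per_bucket notes from each of four buckets, without duplicates."""
    events = [n for n in notes if not n.curated]
    buckets = [
        # (i) most important events
        sorted(events, key=lambda n: n.importance, reverse=True),
        # (ii) most recent events
        sorted(events, key=lambda n: n.written, reverse=True),
        # (iii) related events, ranked by tag overlap with the query
        sorted(
            (n for n in events if n.tags & query_tags),
            key=lambda n: len(n.tags & query_tags),
            reverse=True,
        ),
        # (iv) curated long-term memories
        [n for n in notes if n.curated],
    ]
    picked = []
    for bucket in buckets:
        added = 0
        for note in bucket:
            if added == per_bucket:
                break
            if note not in picked:  # skip notes an earlier bucket already supplied
                picked.append(note)
                added += 1
    return picked
```

The fixed `per_bucket` cap is what keeps the context small: each bucket contributes a few notes, so no single source of memory crowds out the others. A real system would likely replace the tag overlap with embedding search and tune the cap against gold standard examples.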

New papers and benchmarks

New paper on “LLM herding” shows that including buy/sell ratings significantly influences model responses, even if the subsequent analysis contradicts the rating.

My take: For investor agents, sellside research can act as a prompt injection, so managing which insights are fed to the decision models is critical. This can be a problem when you give a model open web search, which can pick up sellside research headlines (OpenReview)

New agent benchmark tests the ability to build LBO, lender and DCF models: GPT 5.4 outperforms Claude and Gemini models but still lags human experts (arXiv.org). Related, an investor Substack tests the leading Claude models on assessing business quality and recommends Sonnet 4.6 with thinking mode enabled.
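On the herding result above, one way to check your own setup is to ask the same question with and without the rating and count how often the answer changes. The sketch below is an assumption-heavy illustration, not the paper's method: the prompt wording, `build_prompt`, `herding_rate` and the `ask` callable (a stand-in for whatever function calls your model) are all hypothetical.

```python
from typing import Callable, Optional, Sequence, Tuple


def build_prompt(analysis: str, rating: Optional[str] = None) -> str:
    """Build a one-word buy/sell question, optionally prefixed with a rating."""
    header = f"Analyst rating: {rating}\n" if rating else ""
    return (
        f"{header}Analysis: {analysis}\n"
        "Would you buy or sell? Answer with one word."
    )


def herding_rate(
    cases: Sequence[Tuple[str, str]],
    ask: Callable[[str], str],
) -> float:
    """Share of (analysis, rating) cases where adding the rating flips the answer."""
    if not cases:
        raise ValueError("need at least one case")
    flips = 0
    for analysis, rating in cases:
        without_rating = ask(build_prompt(analysis)).strip().lower()
        with_rating = ask(build_prompt(analysis, rating)).strip().lower()
        flips += without_rating != with_rating
    return flips / len(cases)
```

A rate near zero suggests the model is reading the analysis. A high rate on cases where the rating contradicts the analysis is the pattern the paper describes, and a reason to filter ratings out before they reach the decision model.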

Updates from AI trading arenas

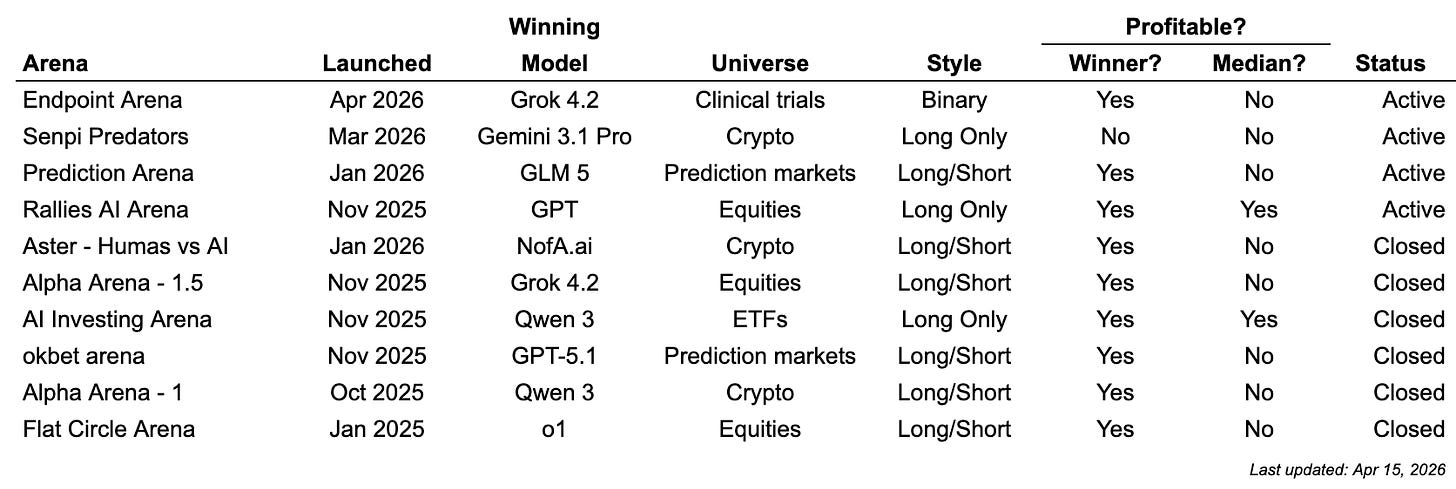

These are public experiments where agents make trading decisions. We track every arena here:

New arena focused on clinical trial predictions (launch post, website). Continues a trend of vertical trading arenas.

GLM 5, a model by publicly traded Chinese lab Z.ai, has been making money on Prediction Arena (PredictionArena.ai). GLM models score well on long-horizon software engineering tasks. The agent powered by GLM 5 appears to prefer betting on Kalshi markets with asymmetric payoffs (i.e., markets that trade around 1 cent for a 1 dollar payoff at time of entry).

Prediction Arena published a paper (arXiv.org). Takeaways are that models perform better when they can select from a larger universe of markets and that there are diminishing returns to incremental research.
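For a sense of why the 1 cent bets favored by the GLM 5 agent are asymmetric, the arithmetic can be sketched in a few lines. This assumes a simple binary contract that pays a fixed amount if the event happens and nothing otherwise, and it ignores fees and spreads; the function names are hypothetical.

```python
def breakeven_probability(price: float, payoff: float = 1.0) -> float:
    """Win probability at which a binary contract has zero expected profit."""
    return price / payoff


def expected_profit(price: float, win_probability: float, payoff: float = 1.0) -> float:
    """Expected profit per contract: probability-weighted payoff minus the entry price."""
    return win_probability * payoff - price


def payoff_multiple(price: float, payoff: float = 1.0) -> float:
    """How many times the stake is returned if the contract pays out."""
    return payoff / price
```

At a 1 cent entry the break-even probability is 1% and a win returns 100 times the stake, so the agent only needs to be right slightly more often than 1 in 100 for the bet to have positive expected value. Most of these contracts still expire worthless, which is why results from this style can look lumpy over short windows.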

Follow for more creative AI use cases

If you would like to discuss incorporating agents into your research process, reply to this email or reach out via X or LinkedIn.