Creative LLM use cases - Prompts for evasion, switching costs, risk arb, and hidden real estate value

Plus: updates on AI expert network interviews, LLM trading arenas and more

Flat Circle tracks creative use cases of LLMs in hedge funds.

Detecting evasion

Generation IM ($21B AUM) shares a couple workflows in their 4Q25 letter:

“Our ‘First Looks’ initiative serves a different function. When analysts evaluate a new company, we leverage AI to provide a snapshot overview with green, yellow and red flags drawn from sources like Glassdoor reviews, which used to take hours of manual work. Finally, our ‘Deception Detection’ dashboard analyses earnings call transcripts across the portfolio, flagging watchlist topics and potential areas for forensic accounting review”

A new paper features the prompt and system design for identifying evasion: EvasionBench: Detecting evasive answers in financial Q&A via multi-model consensus and LLM-as-Judge. The key is to score each response on the degree to which management (i) answers the specific question, (ii) introduces irrelevant framing, (iii) relies on generalities, and (iv) deflects. Their eval is a set of 1,000 human-annotated scores. The prompt is on page 12.
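To make the four-part rubric concrete, here is a minimal Python sketch of how per-response scores from several models could be combined. The four dimensions come from the description above; the 0-3 scale, the equal weighting, the median-based consensus, and the names `EvasionScore` and `consensus` are all my own assumptions for illustration, not the paper's method (its actual prompt is on page 12).

```python
from dataclasses import dataclass
from statistics import median


# Hypothetical rubric: the four dimensions mirror the text above.
# The 0-3 scale and equal weighting are assumptions, not the paper's design.
@dataclass(frozen=True)
class EvasionScore:
    answers_question: int    # 0 = ignores the question, 3 = answers it directly
    irrelevant_framing: int  # 0 = none, 3 = heavy
    generalities: int        # 0 = specific, 3 = all generalities
    deflection: int          # 0 = none, 3 = fully deflects

    def evasion(self) -> float:
        """Return a 0-1 score; higher means a more evasive answer."""
        directness_gap = 3 - self.answers_question
        total = (directness_gap + self.irrelevant_framing
                 + self.generalities + self.deflection)
        return total / 12  # 4 dimensions x max 3 points each


def consensus(scores: list[EvasionScore]) -> float:
    """Combine several models' scores by taking the median evasion value."""
    return median(s.evasion() for s in scores)


# Example: three (hypothetical) judge models score the same answer.
judges = [
    EvasionScore(3, 0, 0, 0),
    EvasionScore(2, 1, 1, 0),
    EvasionScore(0, 3, 3, 3),
]
print(consensus(judges))
```

Taking the median rather than the mean keeps one outlier judge from dominating the consensus, which is one plausible reason to use several models at all.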

Automated expert network interviews

A couple things this week:

Tech investor shares his experience with AlphaSense’s new AI-led interview product, with example outputs

Former Chief Data Scientist at Third Point covers Ribbon.ai’s new expert-network-in-a-box: The biggest disruption to expert networks since Tegus

You can now source 1B+ experts with their tool, instantly book an expert call through their voice AI, get a transcript generated, and then analyze that transcript with the rest of their tools. All of this is white-label and available via API.

Expert networks utilizing AI interviews include: AlphaSense, Expert Insights, Guidepoint, Qualitate, Ribbon.ai, Synquery (Email me any I’m missing, and I’ll compile and circulate a longer list)

I’ve also heard of multiple funds building this internally

My take: I’ve been skeptical of AI-led interviews because I worried they’d overindex on lower-quality “professional experts” and wouldn’t create the chemistry that elicits deeper conversation. While that is definitely a limitation, I underappreciated how much better this experience is for the expert: an LLM is available around your schedule, and it’s never rude to you and never cancels on you. And there’s no chitchat. Also, what keeps investors from doing more interviews is time and mental energy, not so much money. LLM-driven interviews may dramatically increase both the supply of and demand for expert interviews this year.

Software switching costs

Former long/short PM shares his ChatGPT thread on finding low mission-critical software (@atelicinvest):

Prompt: Help me build a first-principles framework to identify low mission-critical software by analyzing integration depth, switching costs, compliance exposure, workflow impact, and behavioral indicators (e.g., low engagement, discounting, promotions), without relying on retention metrics.
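One way to use the framework the prompt asks for is as a simple scorecard. The sketch below is purely illustrative: the dimension names are taken from the prompt, while the 1-5 scale, the equal weights, and the function name `mission_criticality` are my assumptions, not anything from the original thread.

```python
# Hypothetical scorecard: dimensions come from the prompt above.
# The 1-5 scale (1 = weak, 5 = strong) and equal weights are assumptions.
DIMENSIONS = (
    "integration_depth",
    "switching_costs",
    "compliance_exposure",
    "workflow_impact",
    "behavioral_strength",  # 1 = low engagement, heavy discounting/promotions
)


def mission_criticality(scores: dict[str, int]) -> float:
    """Average the five 1-5 dimension scores; lower means less mission critical."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    for name in DIMENSIONS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    return sum(scores[d] for d in DIMENSIONS) / len(DIMENSIONS)


# Example: a made-up tool with shallow integration and heavy discounting.
example = {
    "integration_depth": 2,
    "switching_costs": 1,
    "compliance_exposure": 1,
    "workflow_impact": 2,
    "behavioral_strength": 1,
}
print(mission_criticality(example))
```

In practice the scores themselves would come from the LLM's analysis or an analyst's judgment; the code only shows how the dimensions could roll up into one comparable number.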

More investor workflows

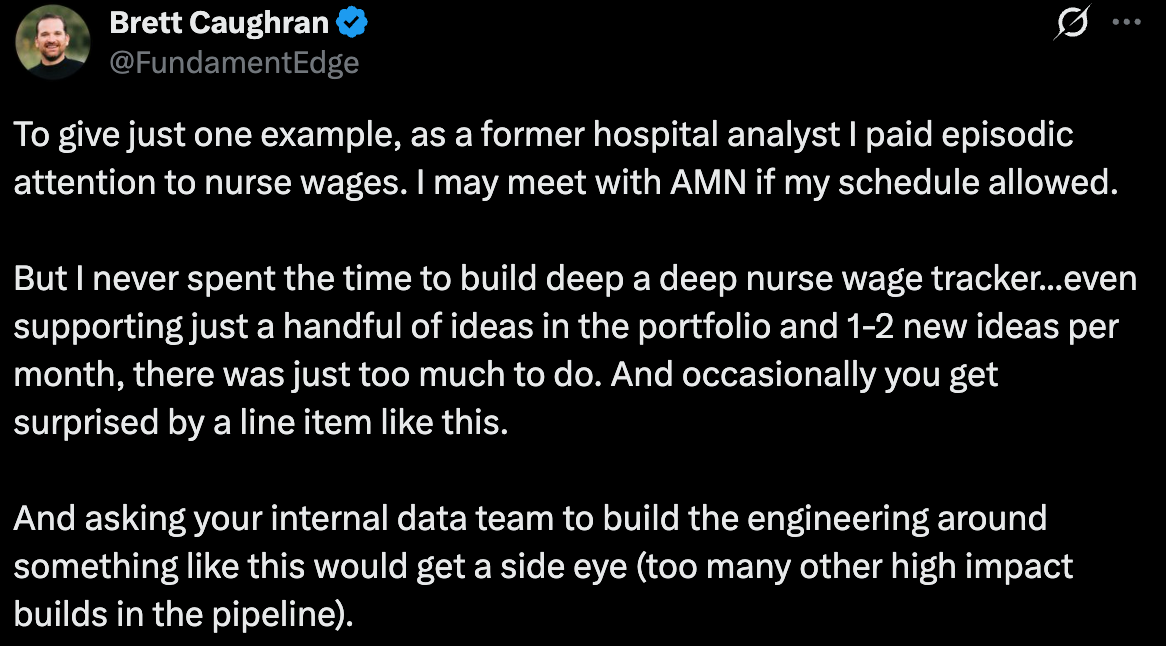

Former Healthcare PM vibecodes datasets his internal data team would never have prioritized (@FundamentalEdge)

My take: Brett also mentions the notion of “artisanal workflows” which I think is a good concept here: everyone’s vibecoded research systems are going to be unique.

Fintool founder shares lessons from two years building investment research agents. Very thorough, technical and good (@Nicbstme)

Prompt for finding risk arb filings (@RodAlzmann).

Prompt for first- and second-order impacts of tariffs, sanctions and export controls in a research paper on geoeconomic pressure. Starts page 10.

Tool using Opus 4.5 to estimate hidden real estate value (@AltayCapital)

TMT and Energy investor shares experience building a 10-K deep dive tool (@TheValueist)

Interesting papers

What does it take to be a good AI research agent? Studying the role of ideation diversity. Two good things in here: (i) as models get better at using tools, LLM systems designed for greater diversity of ideas will outperform, and (ii) changing temperature doesn’t really help.

Taxonomy-aligned risk extraction from 10-K filings with autonomous improvement using LLMs. Written by the team behind AI tool Massive, so they don’t share the prompt. But it’s a good framework for classifying a large universe of companies into a customized set of risk factors. A related tool was recently released on X (@JaredKubin).

Updates from AI trading arenas

These are public experiments where LLMs make trading decisions. A longer summary of this trend is here; the takeaway is that all trading robots eventually lose money, but the arenas are worth monitoring because the newer, more expensive models are starting to lose less money.

Aster - Humans vs AI: traders compete with robots for a $150K prize pool. Right now the AIs are beating the humans on average, but nine out of the top ten traders are human. This feels about right!

Okbet Arena: 5 models compete at placing bets on Polymarket. All models are losing money, and right now GPT-5.1 is in the lead while Deepseek R1 is in last place.

FinDeepForecast: Live benchmark based on a new paper: FinDeepForecast: A live multi-agent system for benchmarking deep research agents in financial forecasting. Basically identical results to Okbet Arena.

Follow for more investor prompts and workflows

If you would like to discuss incorporating LLMs into your research process, reply to this email or reach out via X or LinkedIn.
