Creative LLM use cases - Needles in a haystack, interviewing yourself, email for agents and OpenClaw

Plus: Which model works best for which workflow?

Flat Circle tracks creative use cases of LLMs in hedge funds. If you haven’t already, join hundreds of PMs, analysts and engineers reading each week.

Which model works best for which workflow?

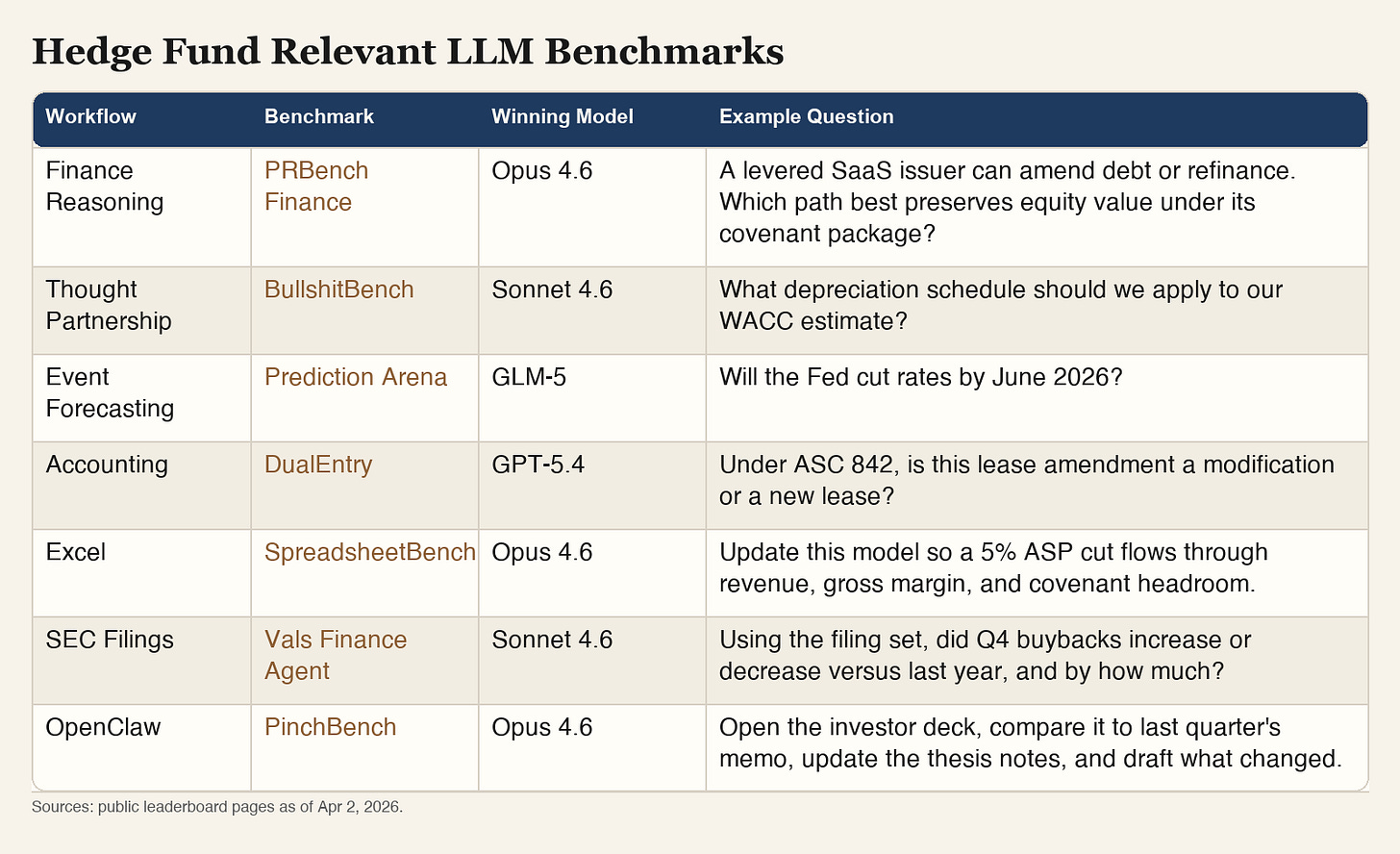

LLM benchmarks score models on real-world tasks designed by experts. These popular benchmarks cover a range of investment research workflows: accounting (DualEntry), Excel (SpreadsheetBench), SEC filings (Vals), finance reasoning (PRBench), event forecasting (Prediction Arena), thought partnership (BullshitBench) and OpenClaw (PinchBench).

We’re developing investment research benchmarks for agentic systems (i.e., not just the models but also the prompts, tools, etc.), since those surrounding choices can drive meaningfully different outcomes. If you’re interested in collaborating, please reach out.

Creative LLM use cases

Prompt and methodology for identifying ‘moving targets’, i.e. flagging whenever management teams change which metrics they highlight (arXiv.org, AI Street)
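As a rough illustration of the idea (not the paper’s actual prompt or method), the core check can be reduced to comparing the set of metrics management highlights in consecutive periods and flagging anything dropped or added. This sketch assumes the highlighted metrics have already been extracted, for example by an LLM, into one set per quarter; the function name `flag_moving_targets` and the sample data are hypothetical.

```python
from typing import Dict, List, Set, Tuple


def flag_moving_targets(
    highlighted: Dict[str, Set[str]],
) -> List[Tuple[str, Set[str], Set[str]]]:
    """Compare consecutive periods and report metrics that were dropped or added.

    `highlighted` maps a period label to the metrics management emphasized in
    that period (hypothetical input format). Periods are processed in sorted
    label order, so labels must sort chronologically (e.g. "2024Q1", "2024Q2").
    """
    flags = []
    periods = sorted(highlighted)
    for prev, curr in zip(periods, periods[1:]):
        dropped = highlighted[prev] - highlighted[curr]  # highlighted before, not now
        added = highlighted[curr] - highlighted[prev]    # newly highlighted
        if dropped or added:
            flags.append((curr, dropped, added))
    return flags


if __name__ == "__main__":
    # Hypothetical metrics pulled from three quarterly earnings releases.
    metrics = {
        "2024Q1": {"revenue growth", "net revenue retention", "gross margin"},
        "2024Q2": {"revenue growth", "net revenue retention", "gross margin"},
        "2024Q3": {"revenue growth", "gross margin", "AI bookings"},
    }
    for period, dropped, added in flag_moving_targets(metrics):
        print(period, "dropped:", sorted(dropped), "added:", sorted(added))
```

Exact string matching is a simplification: the same metric is often worded differently from quarter to quarter, which is where an LLM prompt like the linked one is likely more useful than a plain set comparison.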

YC partner releases an open-source, AI-native email inbox (@agupta, GitHub). It makes it easier to bring internal and external data into your email flow. Alternatively, new YC startup AgentMail is an easy way to give your agents their own email accounts.

Coatue-backed long-only fund uses AI to condense overnight research into custom podcasts (Advisor Perspectives)

Eve also scours the disclosures of more than 13,000 companies; listens to podcasts; scrutinizes social media posts; summarizes the news; and, each morning, generates a podcast for Kishore to listen to while he drives to work.

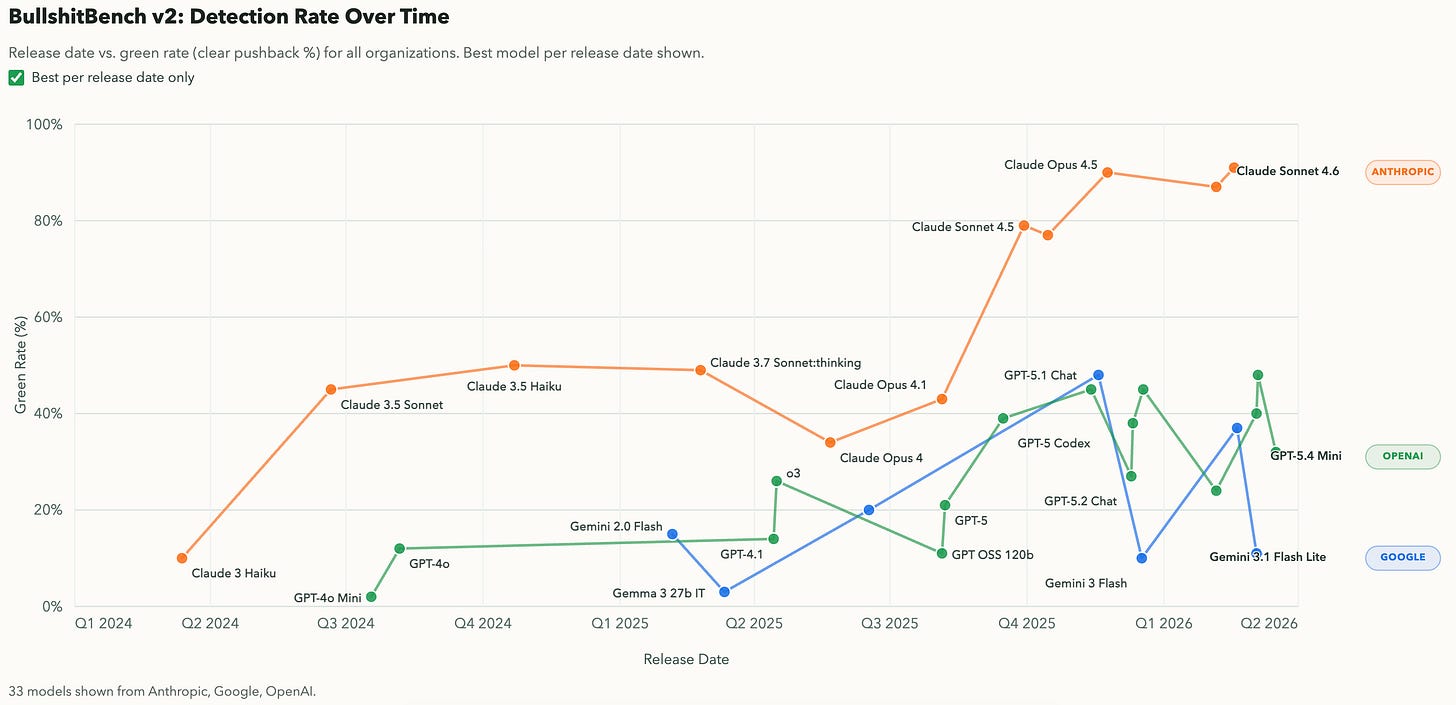

BullshitBench: When using an LLM as a thought partner, does it push back when the premise of your question is flawed? (Benchmark, @petergostev, thanks to Mark Ainsworth). Claude Opus 4.6 lets only 2% of flawed questions through, while GPT 5.4 lets 16% through.

Reddit post on using Claude Code to analyze retailers with satellite data (r/ClaudeCode)

Former hedge fund PM shares prompt for Perplexity Computer to create a guidance credibility analysis (@FundamentalEdge)

AllianceBernstein AI head uses LLMs to fill in missing time series data (post)

Researchers frequently incorporate historical data into their analysis, and data may be unavailable for certain time periods—the dreaded broken time series. Rather than throw away the series, analysts can use AI models to create fill-in data that, in the human expert’s judgement, may be sensible given the context.
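A minimal sketch of that workflow, under stated assumptions: the series is a plain Python list of (period, value) pairs with `None` marking gaps, `build_fill_prompt` shows the kind of context a model would need, and the model call itself is replaced by a hypothetical `neighbour_average` stand-in (simple averaging of the nearest known values, not an LLM) so the example runs offline. None of these names come from the post.

```python
from typing import Callable, List, Optional, Tuple

# A series is a list of (period label, value) pairs; None marks a missing value.
Series = List[Tuple[str, Optional[float]]]


def build_fill_prompt(series: Series, context: str) -> str:
    """Build a prompt asking a model to propose values for missing periods."""
    rows = [f"{p}: {'MISSING' if v is None else v}" for p, v in series]
    return (
        "Some periods in this time series are marked MISSING.\n"
        f"Context: {context}\n"
        + "\n".join(rows)
        + "\nPropose a plausible value for each MISSING period and explain why."
    )


def fill_gaps(
    series: Series, propose: Callable[[Series, int], float]
) -> List[Tuple[str, float, bool]]:
    """Fill each missing value via `propose`; the bool flags filled points."""
    out = []
    for i, (period, value) in enumerate(series):
        if value is None:
            out.append((period, propose(series, i), True))
        else:
            out.append((period, value, False))
    return out


def neighbour_average(series: Series, i: int) -> float:
    """Hypothetical stand-in for a model call: average the nearest known neighbours."""
    left = next((v for _, v in reversed(series[:i]) if v is not None), None)
    right = next((v for _, v in series[i + 1:] if v is not None), None)
    known = [v for v in (left, right) if v is not None]
    if not known:
        raise ValueError("series has no known values")
    return sum(known) / len(known)


if __name__ == "__main__":
    # Hypothetical monthly series with a two-month gap.
    data: Series = [("2020-01", 10.0), ("2020-02", None), ("2020-03", None), ("2020-04", 16.0)]
    print(build_fill_prompt(data, "Monthly sales index for a hypothetical retailer"))
    for row in fill_gaps(data, neighbour_average):
        print(row)
```

The boolean flag is the important design choice: filled values stay distinguishable from reported ones, so the analyst can accept or override each proposal, in keeping with the post’s emphasis on the human expert’s judgement. Replacing `neighbour_average` with a function that sends the prompt to a model and parses its answer is left open, since the post does not describe a specific setup.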

TMT investor shares favorite LLM use cases (@lfg_cap)

Option scenario pricing

Portfolio optimiser

Factor / thematic correlation / analysis / alerter

Getting from 0 to 95% on a new sub-sector

Qualitative relative business quality analysis (based on structured questionnaires)

New ideas / needle in a haystack (parsing through 1000s of emails and Twitter messages) for differentiated / contrarian views on different industries / geopolitics etc.

AlphaSense launches custom agents that run prompts on a schedule, plus custom AI expert calls that automatically interview a panel of experts (press release)

Interviewing yourself

Several good pieces this week on documenting your personal workflows in prompts and Markdown files.

Example prompt to launch a voice interview session that turns an open-ended discussion into detailed instructions (Ben’s Bites)

More interview prompts that extract context from yourself and make your agents more effective (@Shpigford)

Lawyer discusses how he embeds his personal frameworks into skill files (@zackbshapiro). He also argues that it’s impossible to infer someone’s process simply by looking at their outputs; the process has to come directly from the person:

I’ve had people try to reverse-engineer my Claude skills by studying my outputs, using AI to analyze what I produce and reconstruct the instructions that generated it. They never get close...what my skills actually contain is not a description of what the output should look like. It’s a detailed operating procedure for how the output gets created: decision trees, analytical frameworks, sequencing logic, edge-case handling, judgment calls about when to be aggressive and when to hold back. You can’t see any of that by studying the finished product...A finished contract shows you what a great lawyer decided. It doesn’t show you how she decided it, what she considered and rejected, or the order in which she worked through the issues. The process is invisible in the product. My skills encode the process.

Follow for more investor LLM workflows

If you would like to discuss incorporating LLMs into your research process, reply to this email or reach out via X or LinkedIn.